Anthropic Sues Pentagon Over AI Ban Following Refusal for Weapons Use

Anthropic is challenging in federal court the US Department of Defense's designation of the company as a supply chain risk. The lawsuit stems from Anthropic's refusal to allow its Claude AI to be used for autonomous weapons and mass surveillance. A hearing on the company's request for a temporary injunction was held on Tuesday in a northern California district court.

Hearing on AI Ban

Anthropic appeared in federal court against the US Department of Defense on Tuesday afternoon.

The artificial intelligence company is seeking a temporary injunction to pause the government's ban on military and contractor use of its technology. The hearing took place in a northern California district court. The dispute arises from Anthropic's refusal to permit use of its Claude AI chatbot for domestic mass surveillance and fully autonomous lethal weapons systems.

The company filed the lawsuit earlier this month after the Defense Secretary labeled it a supply chain risk. Anthropic alleges the designation will cause irreparable harm.

The Pentagon maintains that the ban protects national security interests. No ruling was issued at the hearing.

The Dispute

The conflict escalated after Anthropic declined to adapt its AI for military applications involving lethal autonomy.

The company has publicly stated its commitment to safe AI development that excludes harmful uses. The Pentagon's decision affects federal agencies and contractors that rely on Anthropic's models for non-weapons tasks. The order prohibits procurement and use of Anthropic products across government operations.

The declaration frames the company as a risk due to its policies. Anthropic contests this as discriminatory against ethical AI providers. The lawsuit represents an early legal test of government authority over private AI firms' usage restrictions.

Proceedings are ongoing, with potential for broader implications on AI governance.

A successful injunction could restore access to Anthropic's technology for government users.

A failed bid might deter other AI companies from taking similar ethical stances. The case highlights tensions between national security and AI safety principles. Industry observers note the ban could shift market dynamics for AI providers.

Anthropic's Claude models are used in various sectors beyond defense. Resolution may influence future regulations on AI deployment.

Story Timeline

5 events

- Mar 24, 2026

Anthropic and Pentagon representatives appear in federal court for hearing on temporary injunction.

1 source: The Guardian

- Earlier March 2026

Anthropic files lawsuit against Defense Department after supply chain risk designation.

1 source: The Guardian

- Recent weeks

President Trump orders US agencies to stop using Anthropic's AI tools.

1 source: The Guardian

- Prior to March 2026

Defense Secretary Pete Hegseth declares Anthropic a supply chain risk due to AI usage refusals.

1 source: The Guardian

- Ongoing

Anthropic refuses to allow Claude AI for autonomous weapons and mass surveillance.

1 source: The Guardian

Potential Impact

- 01

US military contractors lose access to Claude AI for non-lethal applications.

- 02

Court ruling sets precedent for government oversight of AI usage terms.

- 03

Anthropic experiences revenue decline from federal contracts.

- 04

Other AI firms adopt stricter ethical policies to avoid similar bans.

Transparency Panel

Related Stories

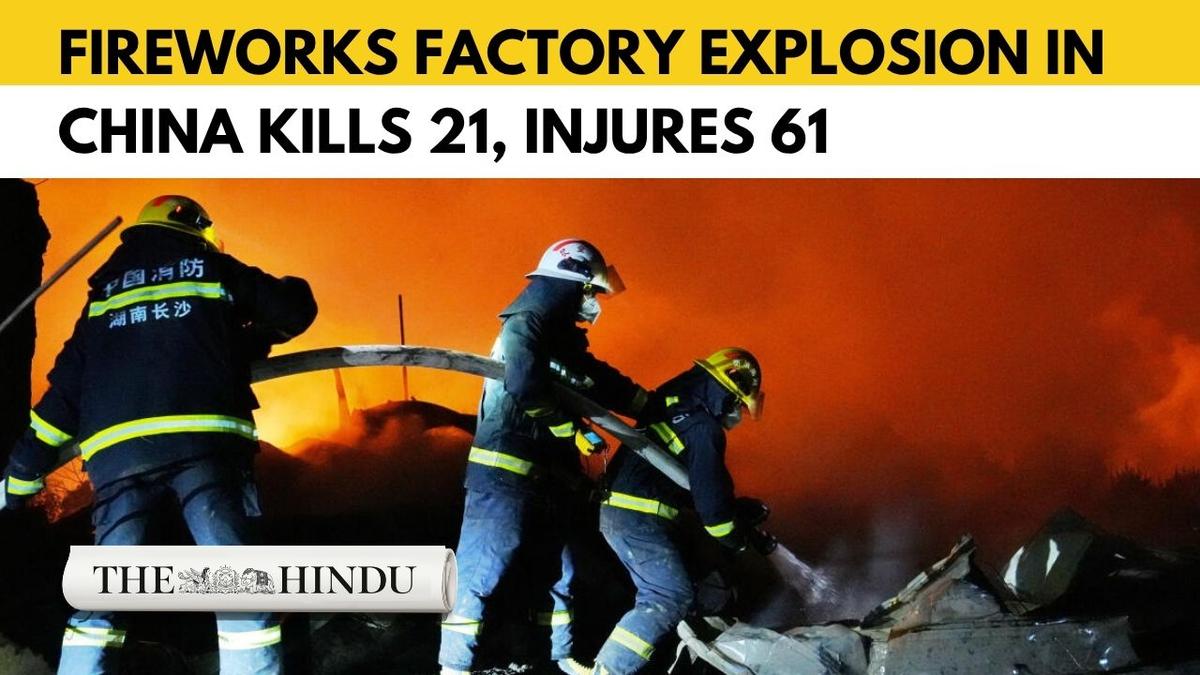

thehindu.com

thehindu.comExplosion at China Fireworks Factory Kills 26 and Injures 61 in Hunan Province

An explosion at the Huasheng Fireworks Manufacturing and Display Company in Liuyang city, Hunan province, killed at least 26 people and injured 61 on Monday afternoon. Rescue operations have concluded, with authorities detaining company staff and halting all local fireworks produ…

io9.gizmodo.com

io9.gizmodo.comHantavirus Outbreak on MV Hondius Cruise Ship Prompts Three Evacuations and Monitoring

Eight cases of hantavirus, including three deaths, have been linked to passengers on the MV Hondius. The ship remains anchored off Cape Verde with about 150 people aboard while health officials conduct contact tracing and plan further screening in the Canary Islands.

972mag.com

972mag.comADL Audit: Antisemitic Incidents Drop 33% in 2025, But Physical Assaults Hit Record High and Three Killed

The Anti-Defamation League released its annual audit on May 6, 2026, documenting a sharp decline in overall antisemitic incidents across the United States during 2025. Physical assaults reached record levels with more than 300 victims and three deaths, the first such fatalities s…