Robot Navigation Strategy Based on Honeybee Learning Flights Proposed in Nature Paper

A research paper published in Nature proposes Bee-Nav, which trains a tiny neural network during short robotic learning flights to enable efficient visual homing. Real-world tests with a small drone achieved high success rates over distances up to 600 meters using neural networks as small as 3.4 kB.

interestingengineering.com

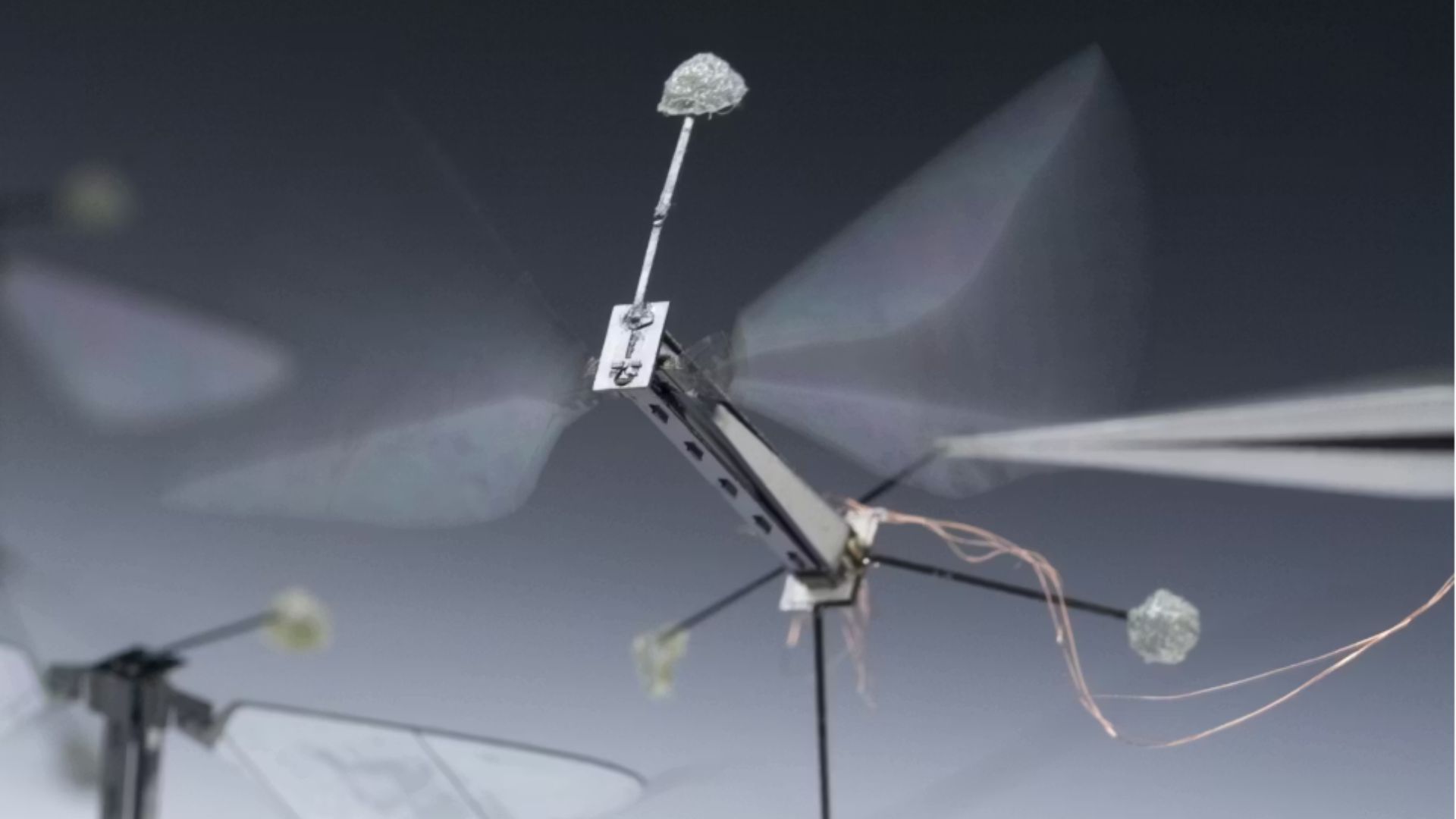

A research paper titled 'Efficient robot navigation inspired by honeybee learning flights' was published in Nature. The paper proposes a navigation strategy named Bee-Nav, inspired by the visual learning flights of honeybees. Bee-Nav uses a tiny neural network, trained during robotic learning flights, to map omnidirectional images to a home vector derived from path integration.

Honeybees perform one to several short learning flights before departing on longer exploration or foraging trips. The learned homing area (LHA) is the area circumscribing the learning flight trajectories, within which the neural network can estimate the home location. […]00% of the total flight area.

[…]24%. […]6%.

[…]4%. The learning flight trajectory fits within a 10-m-radius circle around the home location. […]5 m of home for 100% of 30–110-m flights.
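As a rough illustration of the LHA, the sketch below approximates it as the smallest circle centred on the home location that contains every point of the learning flight, in line with the 10-m-radius description above. This is a simplifying assumption: the LHA is defined as the area circumscribing the trajectories, which need not be a circle. The loop-shaped flight and the helpers `lha_radius` and `inside_lha` are hypothetical.

```python
import math

def lha_radius(trajectory, home=(0.0, 0.0)):
    """Radius of the smallest home-centred circle containing every
    trajectory point (a simplified, hypothetical stand-in for the LHA)."""
    hx, hy = home
    return max(math.hypot(x - hx, y - hy) for x, y in trajectory)

def inside_lha(point, radius, home=(0.0, 0.0)):
    """True if `point` lies within `radius` metres of home."""
    return math.hypot(point[0] - home[0], point[1] - home[1]) <= radius

# Hypothetical learning flight: loops of growing radius around home (metres).
flight = [(r * math.cos(a), r * math.sin(a))
          for r in (2.0, 5.0, 8.0, 10.0)
          for a in [k * math.pi / 8 for k in range(16)]]

radius = lha_radius(flight)
print(f"LHA radius: {radius:.1f} m")   # LHA radius: 10.0 m
print(inside_lha((6.0, 6.0), radius))  # True (about 8.5 m from home)
print(inside_lha((30.0, 0.0), radius)) # False
```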

[…]5 m of home for 70% of 200–600-m flights in windy conditions using a 42-kB neural network. […]3-kB attention neural network.

In ten different simulated forests, Bee-Nav achieved a 100% visual homing success rate within the LHA radius. […]5 times the LHA radius, depending on the environment. Within the LHA, the network's angular errors were smaller than about 40°.
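The angular errors quoted here compare the direction the network predicts with the true direction home. One minimal way to compute such an error for 2-D vectors is sketched below; `angular_error_deg` is a hypothetical helper, and the article does not state the paper's exact error definition.

```python
import math

def angular_error_deg(predicted, true):
    """Unsigned angle in degrees (0 to 180) between two 2-D home vectors.
    Only direction matters; vector lengths are ignored."""
    (px, py), (tx, ty) = predicted, true
    cross = px * ty - py * tx  # proportional to sin of the angle
    dot = px * tx + py * ty    # proportional to cos of the angle
    return abs(math.degrees(math.atan2(cross, dot)))

# Hypothetical example: true home direction along +y, prediction 30° off.
true_vec = (0.0, 1.0)
pred_vec = (math.sin(math.radians(30)), math.cos(math.radians(30)))
print(round(angular_error_deg(pred_vec, true_vec), 1))  # 30.0
```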

Visual homing is generally successful if angular errors stay below 90°. Bee-Nav's visual homing performed substantially better than both a snapshot-based approach and a perfect-memory approach that stored all learning flight images.
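The 90° threshold follows from simple geometry: a step of length s taken at angle θ from the true home direction changes the squared distance d² by −2ds·cos θ + s², so for cos θ > 0 (θ < 90°) and small steps the robot keeps getting closer. The toy simulation below assumes a constant angular error at every position, which a real network does not have; `home_with_bias`, the step size and the step count are hypothetical.

```python
import math

def home_with_bias(start, error_deg, step=0.1, n_steps=2000):
    """Walk toward home at the origin, but always rotate the true home
    direction by a fixed angular error. Returns the final distance to home."""
    x, y = start
    err = math.radians(error_deg)
    for _ in range(n_steps):
        if math.hypot(x, y) == 0.0:
            break
        # True home direction, rotated by the constant (hypothetical) error.
        heading = math.atan2(-y, -x) + err
        x += step * math.cos(heading)
        y += step * math.sin(heading)
    return math.hypot(x, y)

# Start 20 m from home and compare several constant angular errors.
for err in (0, 40, 80, 100):
    print(err, round(home_with_bias((20.0, 0.0), err), 2))
```

For errors below 90° the simulated distance shrinks until it settles near s/(2 cos θ), the radius at which −2ds·cos θ + s² = 0; for errors above 90° it grows with every step.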

The robot's path integration estimates velocity from optical flow combined with laser-based height measurements, and obtains heading by integrating the gyroscope. The view memory is a small feedforward convolutional neural network that maps an unwrapped omnidirectional image to a home vector. A recent robotics study used a 9-kB neural network for visual homing at distances of up to 18 m outdoors.
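The path-integration step can be sketched in a few lines. This minimal 2-D version assumes flat ground, a downward-looking camera whose optic flow has already been compensated for rotation (so translational flow is roughly velocity divided by height), and a gyro that reports yaw rate. `PathIntegrator` and its inputs are hypothetical, not the authors' implementation. The negated position is the kind of home vector the view-memory network maps images to.

```python
import math
from dataclasses import dataclass

@dataclass
class PathIntegrator:
    x: float = 0.0        # position relative to home in a world frame (m)
    y: float = 0.0
    heading: float = 0.0  # yaw (rad), counter-clockwise from the world x-axis

    def update(self, flow_x, flow_y, height, yaw_rate, dt):
        """One integration step (all inputs are hypothetical sensor values).
        flow_x, flow_y: rotation-compensated ventral optic flow (rad/s), body frame
        height: laser-measured height above flat ground (m)
        yaw_rate: gyro yaw rate (rad/s); dt: time step (s)"""
        # Heading from integrating the gyro.
        self.heading += yaw_rate * dt
        # Over flat ground, flow ~ v / h, so body-frame velocity ~ flow * h.
        vx_body, vy_body = flow_x * height, flow_y * height
        # Rotate body-frame velocity into the world frame and integrate.
        c, s = math.cos(self.heading), math.sin(self.heading)
        self.x += (c * vx_body - s * vy_body) * dt
        self.y += (s * vx_body + c * vy_body) * dt

    def home_vector(self):
        """Vector pointing from the current position back to home."""
        return (-self.x, -self.y)

# Usage: fly 10 m forward at 2 m height, turn 90° left, fly 5 m forward.
integrator = PathIntegrator()
for _ in range(100):
    integrator.update(0.5, 0.0, 2.0, 0.0, 0.1)          # 1 m/s for 10 s
for _ in range(10):
    integrator.update(0.0, 0.0, 2.0, math.pi / 2, 0.1)  # turn in place
for _ in range(50):
    integrator.update(0.5, 0.0, 2.0, 0.0, 0.1)          # 1 m/s for 5 s
home = integrator.home_vector()
print(tuple(round(v, 3) for v in home))
```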

Before this paper, the state of the art was a tiny flying robot that used 500 kB of memory to navigate a 4 × 5-m area. Honeybees can navigate up to several kilometres from their hive.
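For a sense of scale, the arithmetic below compares the prior 500-kB memory footprint with the 3.4-kB Bee-Nav network mentioned in the summary, and estimates how many weights 3.4 kB could hold at common numeric precisions. It assumes 1 kB = 1000 bytes and that the budget holds weights only; the article does not say which precision or storage format the authors used, and `max_weights` is a hypothetical helper.

```python
BYTES_PER_KB = 1000  # assumption: decimal kilobytes

def max_weights(budget_bytes: int, bytes_per_weight: int) -> int:
    """Number of weights that fit in a byte budget (weights only)."""
    return budget_bytes // bytes_per_weight

state_of_art = 500 * BYTES_PER_KB  # prior tiny flying robot
bee_nav_smallest = 3_400           # 3.4 kB

print(f"Memory reduction: {state_of_art / bee_nav_smallest:.0f}x")  # Memory reduction: 147x
for name, width in (("float32", 4), ("float16", 2), ("int8", 1)):
    print(name, max_weights(bee_nav_smallest, width))  # 850, 1700, 3400
```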


Story Timeline (3 events)

- 2026-05-13: Research paper 'Efficient robot navigation inspired by honeybee learning flights' published in Nature (1 source: Nature)
- Prior to publication: Simulations conducted showing LHA requirements for different path integration noise levels (1 source: Nature research paper)
- Prior to publication: Real-world indoor, outdoor and windy-condition experiments performed with a small drone (1 source: Nature research paper)

Potential Impact

- 01: Reduces memory requirements for visual homing from the 500-kB state of the art to as little as 3.4 kB
- 02: Enables long-range navigation for resource-constrained tiny flying robots previously limited to small areas
- 03: Provides new perspectives on the neuroethology of insect navigation, including visual learning and cognitive maps
